If we don’t win, you don’t pay.

NO WIN – NO FEE

ON CALL 24/7

U.S. Marine

Are you seeking answers after a bad car accident in Greater Los Angeles, CA? You are entitled to compensation for traffic collisions you didn’t cause! Is your gut telling you to hire a local car accident law firm? A professional motor vehicle accident attorney can help you get back on your feet after an auto accident in Los Angeles County, CA, especially one where fault is disputed. But who can you trust? Our serious injury car accident lawyers are ready to help you and your loved ones, because we know how important it is to receive the MAXIMUM COMPENSATION after a Los Angeles car accident.

Why Hire a Los Angeles Car Accident Attorney?

You may wish to handle your Los Angeles car accident claim alone. However, a qualified Los Angeles car crash lawyer can quickly help you with claims involving all common car accident injuries. Ehline has a proven staff of award-winning Los Angeles car accident lawyers with over 30 years of combined experience, and we can help you hold every negligent party liable for your damages so you get the most financial compensation. Our Southern California Super Lawyers have the know-how to guide you expertly, and we will hold your hand through the mental anguish of the liability insurance claims process. We also handle fatal accident (wrongful death) claims.

Leadership and Convenience

Taking charge from the start and having local offices are essential. Our top auto accident lawyers will explain the legal process step by step, in detail, at a location of your choice so you feel comfortable and understand what to expect.

Free Case Review with Top Los Angeles Car Accident Lawyers

Below, we walk you through the evidence-gathering process. We also offer tips for building a stronger case when negotiating with insurers, whether your claim involves a minor fender bender or life-altering injuries. You can trust our award-winning Los Angeles car accident lawyers to fight hard until you are fully compensated.

Discover the Ehline Difference

- Our team has obtained OVER $150 million from insurance companies on behalf of satisfied clients.

- Please send us your message for a FREE CASE REVIEW now!

About Our Attorney Awards, Reviews and Accolades

- Michael Ehline was named a Super Lawyers Rising Star multiple times between 2006 and 2015.

- Newsweek Magazine awarded its “Premier Personal Injury Attorneys” award to Ehline Law Firm’s attorneys in 2015.

- CNN interviewed Michael Ehline about cruise ship law, and he appeared as a guest on NBC to discuss limousine accident law. Michael was also interviewed by Nancy Grace on CBS about his expertise in California dog bite law.

Over $150 Million in Settlements and Verdicts

- $8.7 Million California Car Accident Settlement.

- Case details: a settlement for a military veteran who suffered a brain injury in a motorcycle accident involving a work vehicle.

See more sample case results, including wrongful death cases.

We’ve Handled Hundreds of Car Accident Cases!

Our auto accident lawyers have handled the common car accident injuries you may be facing. Our serious injury attorneys know that most motor vehicle accidents leave victims with medical expenses and lost wages.

You may have already paid some medical bills and property damage costs out of pocket.

Our skilled Los Angeles car accident lawyers fight selflessly for Southern California accident victims on a contingency basis. Let’s look at some car accident statistics and a few things every car accident victim should know.

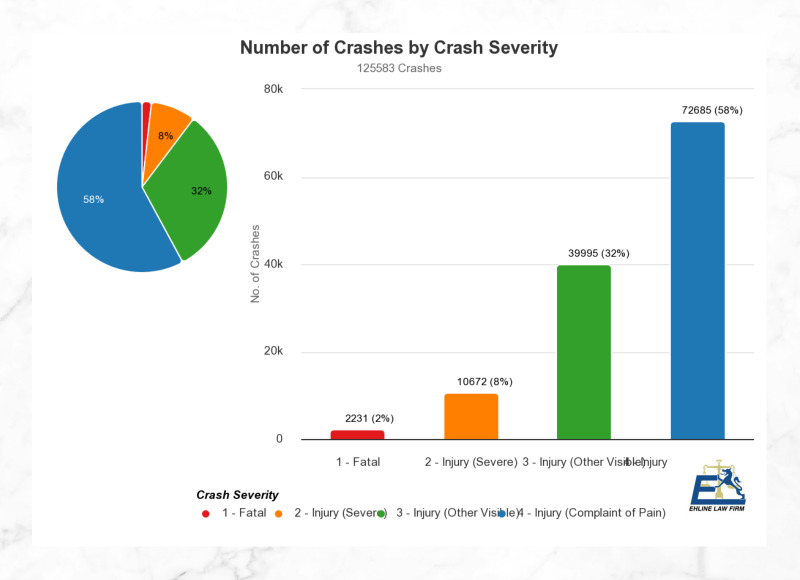

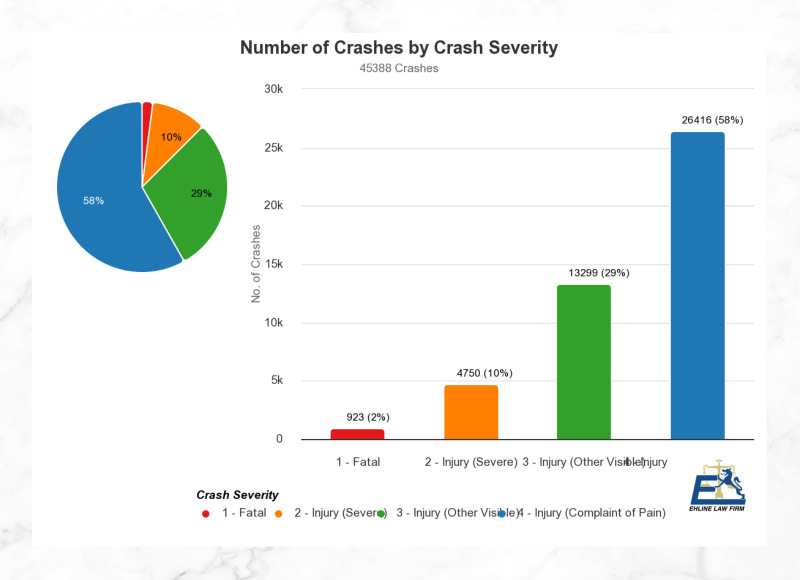

Car Accident Statistics in Los Angeles

The odds of being in a car accident in Los Angeles are far higher than in smaller cities and counties.

According to LAPD data, there were 236 car accident fatalities in the city alone in 2019. Motor vehicle accidents are among the leading causes of serious injuries requiring ongoing medical care in Los Angeles, California. (See statistical data from the California Highway Patrol (CHP) and the California Office of Traffic Safety (OTS).)

The most recent annual data show that:

- More than 3,904 people were killed, and

- More than 277,160 people were injured in car accidents.

Do We Have a Proven Track Record?

Yes! We have the trial advocacy know-how and the financial resources to win auto accident cases against negligent drivers, including:

- Vast knowledge of all relevant CA laws

- Expertise in determining the value of car accident claims

- Dedicated help in seeking fair compensation

- Membership in the Consumer Attorneys Association

- Settlement negotiation experience

Our Los Angeles car accident lawyers maintain multiple fully staffed offices throughout the United States with complete shuttle service. Our car accident attorneys can review the facts and assist with your claim anywhere in California, whether at Orange County locations like Santa Ana or by traveling to Woodland Hills, Bakersfield, and 29 Palms.

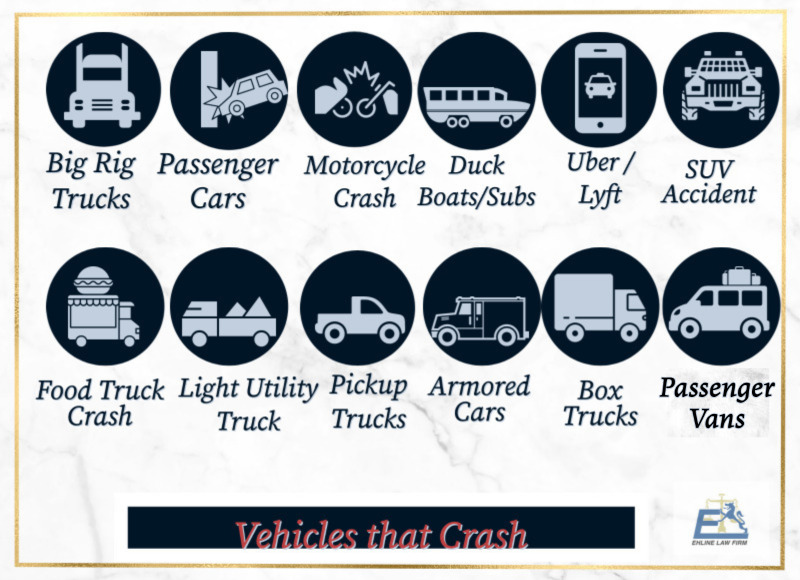

What Types of Vehicle Accidents Do We Handle?

If you or a loved one was injured in an accident involving any of the following vehicles, you may want to seek a free consultation:

- Large commercial trucks, also known as big rigs, 18-wheelers, or tractor-trailers

- Passenger vehicles, including 4-door, 2-door, and hatchback cars

- Motorcycles (even a rear-end collision can be fatal)

- Duck boats and other amphibious vehicles

- Uber/Lyft rideshare vehicles

- Sport utility vehicles (SUVs)

- Food delivery vehicles

- Light utility trucks

- Pick-up trucks

- Armored cars

- Box trucks

- Mini-vans

- Trains

Note: Pedestrian accidents, bicycle wrecks, and rollover accidents often occur at crosswalks and intersections, frequently when a driver is making a left-hand turn.

Can We Help Undocumented Immigrants?

Yes. In California, a person’s immigration status cannot be used against them in a personal injury case, including a car accident lawsuit. If someone’s negligence caused you harm, your immigration status does not affect your right to seek justice or hospital care after a car accident. To safeguard your privacy, our aggressive attorneys will promptly object to any attempt to obtain such information.

While English is the primary language spoken in court, Spanish speakers and others who do not speak English commonly use licensed interpreters. Our legal team works with highly skilled interpreters. We will also stay in constant communication, using the latest technology, and keep you informed at every step of the car accident legal process.

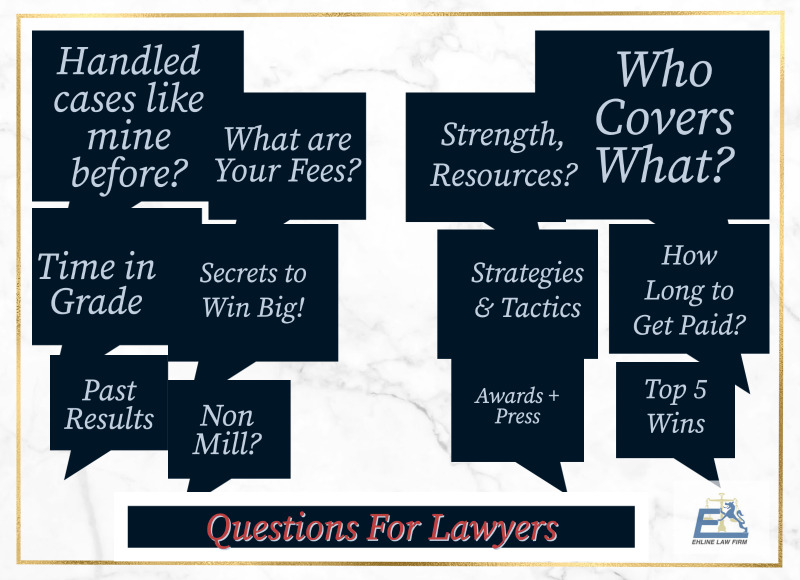

Other Common Questions Victims Ask

- Have you handled traffic collisions like mine before, and if so, how many?

- If I don’t win the case, will I be responsible for covering your expenses?

- What are your fees for handling my case?

- What staffing, financial, and other resources does your firm have to handle and fight my car accident case effectively?

- How long have you been handling auto accidents, specifically in the City of Los Angeles?

- Can you estimate how long my car accident claim is likely to take?

- What strategies or actions can improve the chances of a successful outcome?

- How many verdicts and settlements have your attorneys won?

- Will you show me the details of your top five jury verdicts or share client recommendations?

- Were those top verdicts achieved with the assistance of an in-house team, or did you need to engage outside lawyers?

How Do Experienced Lawyers Prove A Case?

Proving a case starts with evidence showing that the other driver was negligent and that this negligence caused your injuries.

Here are some personal injury law tips for dealing with severe injuries:

1. Why Is Filing a Prompt Car Accident Claim Important?

The main reason is to create a record proving you were there and got hurt. Never admit fault. People can suffer traumatic brain injuries or even die in seemingly minor traffic accidents, so call the police. An official police report from the crash scene can help throughout the legal process, including when you later negotiate a fair settlement with the insurance claims adjuster.

After calling an ambulance, promptly make a police accident report, whether the crash was a hit-and-run or an injury accident caused by something like an illegal U-turn:

- By law, drivers involved in a crash that causes injury, death, or more than $1,000 in total property damage must report it.

- Typically, you call the CHP or local police, and officers come to the scene to investigate the collision.

CAVEAT: The accident victim should not report the claim to the collision insurance agent until after getting medical attention and talking to a car accident lawyer!

2. Why Is Seeking Prompt Medical Assistance Important?

This creates a record close in time to your injury that corroborates the harm and helps keep your condition from getting worse. If you were injured in a car accident, getting evaluated by medical professionals is vital, whether you take an ambulance to the ER or visit an urgent care center. Your medical records and hospital bills will document both major and minor injuries, such as whiplash, for later use as evidence.

Other Common Injuries

- Whiplash injuries

- Spinal cord injuries

- Internal bleeding

Even if your injuries seem minor, pain can become unmanageable once the adrenaline wears off, and some injuries turn out to be permanent. You may need medical care, pain medication, or surgery to prevent further injury, and that treatment also creates evidence.

“Our top car accident lawyer offers confidential legal counsel through a free consultation, 24/7, when you call us. Our personal injury law firm is available anytime to answer important questions from new clients.” – Ehline Attorneys at Law – Accident-Personal Injury Firm 213-596-9642.

3. Why Gather and Record Related Evidence?

All successful personal injury lawsuits require a solid foundation of relevant evidence. To recover maximum compensation, quickly secure and document pertinent information to help negotiate your claim with the other driver’s insurance company.

Among other things:

- Take smartphone photos of the accident scene, including traffic signals, the vehicles involved, injured parties, etc.

- Obtain the contact information of any witnesses.

- Gather the names, driver’s license numbers, and insurance information of all drivers involved in the wreck.

4. Why Consult with Local Los Angeles Car Accident Law Offices?

Local lawyers know the courts and local jury tendencies, which helps them evaluate your case. Consult with an experienced car accident lawyer as soon as you are physically able. We can perform a detailed review of your claim during the free consultation.

After you sign a retainer agreement, our auto accident attorneys will fight to obtain full and fair financial compensation for the losses you suffered, whether through a settlement or a verdict.

Why Does the Statute of Limitations Matter?

If you miss the statute of limitations deadline, you can lose your right to recover damages for negligence. Under California law, car accident victims generally have two years from the date of the collision to file a personal injury lawsuit. If you fail to file on time, your case will likely be dismissed. (Claims against a government agency generally must be presented within six months.) Contact our Los Angeles auto accident attorneys to recover compensation before your time runs out.

Proving Liability in a Los Angeles Car Accident

California is an at-fault insurance state, so the other driver or another at-fault party must be found legally liable for negligently or recklessly causing both your crash AND your injuries.

Negligence Defined: A person’s failure to use the level of care that a reasonably prudent person would have used under the same or similar circumstances.

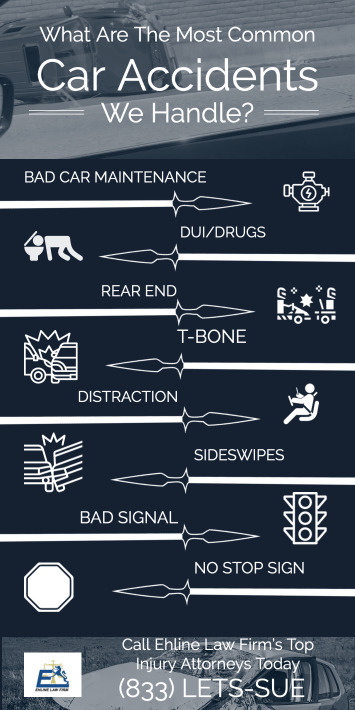

Some Examples of Negligence

- Failure to conduct proper vehicle maintenance

- Drunk or drugged driving

- Rear-end collisions/tailgating

- T-bone collisions

- Distracted driving

- Side-impact collisions

- Running a red light

- Running a stop sign

Tip: Caltrans may also be liable when negligent traffic signal phasing or a similar roadway problem contributes to an accident victim’s injuries.

Here are some other examples:

- Parking lot collisions

- Texting while driving

- Unsafe left turns

- Head-on collisions

- Hit-and-run crashes

- Speeding

- Unsafe merging

- Product defects

- Failure to yield

- Rollovers

Note that cases similar to yours won’t necessarily result in the same recovery.

Pure Comparative Negligence

Some jurisdictions follow contributory negligence, under which an injured person who is even slightly at fault may recover nothing, unless the last clear chance doctrine applies because the defendant had the final opportunity to avoid the accident. California instead follows a fairer pure comparative fault system. (Commercial trucks may have several owners, so the driver may not be the only party accountable.)

Comparative negligence reduces the injured person’s financial recovery by their percentage of fault. For example, if the injured person was 98% at fault for the crash, they could still collect 2% of the damages award.

“My free consultation with Ehline’s lawyers gave me supreme confidence that these Los Angeles, CA attorneys were within easy reach. Their compassionate Southern California team promptly returned my calls 24 hours a day, including weekends. Michael Ehline helped manage my medical treatment and kept me in the loop while he fought to obtain money from the at-fault driver’s insurance company.

My family and I were thoroughly impressed with Michael’s skillful handling of the deplorable insurance adjuster (total jerk). We were flabbergasted by the large settlement we received on a contingency fee basis.” – Gabriel Ortega.

Damages in Typical Car Accidents

Unlike a criminal case, a civil claim under California car accident law does not result in jail time. Damages can cover medical expenses, lost wages, and pain and suffering, and your attorney’s fees are typically paid out of the recovery. For the best possible outcome, hire a high-quality car accident attorney for guidance and counsel.

Your legal damages could include:

- Economic damages: These include special damages such as medical bills (for example, surgery or post-traumatic stress disorder therapy), ruined personal items (property damage), and lost wages.

- General damages: These non-economic damages compensate for pain and suffering, and their value depends heavily on the severity of your injury.

- Punitive damages: Courts award punitive damages only in rare cases involving reprehensible conduct.

- Demand package: Next, our experts send a settlement demand letter to the insurance providers, laying out the compensation you are seeking.

You may be entitled to monetary damages, including:

- Lost past, present, and future income

- Nursing care services in the home

- Physical therapy expenses

- Emergency doctor bills

- Permanent disability

- Reduced well-being

- Pain and suffering

- Emotional distress

- Property damage

- Disfigurement.

Our Los Angeles car crash attorneys will gather information and, with personalized attention, calculate the maximum damages you can seek in your case.

Some Safe Los Angeles Driving Tips

Follow the road safety example of other responsible drivers:

- Keep three seconds of space between you and the vehicle ahead

- Leave early for your destination

- Pay attention to road conditions

- Buckle up with seatbelts

- Avoid driving in bad weather when possible

- No drunk driving!

- Obey traffic and road rules.

Contact the Best Los Angeles Car Accident Lawyer At Ehline Law

Are you or a loved one still seeking a Los Angeles car accident attorney after an injury or a traffic fatality? Our car accident lawyers are devoted advocates for injured car accident victims.

If you or a family member wishes to file a claim against the responsible party, it is best to pick up the phone and speak with car accident experts. Our Los Angeles car accident lawyers can ensure you receive the personal attention you deserve from a caring advocate who listens and answers your questions.

Contact our Los Angeles car accident lawyers promptly at (213) 596-9642 to discuss forming an attorney-client relationship and receiving complete representation. Hesitating could mean giving up your right to pursue compensation from the person or entity that caused the car accident. Put our years of experience to work for you. Ask Mike Ehline for help as your go-to car accident lawyer in Los Angeles!
